As technology slowly makes music accessible everywhere, traditional music production is evolving with the introduction of artificial intelligence. Although artificial intelligence is able to learn and can make parts of manual audio engineering dispensable, it still lacks the creative human touch. // By Sebastian Lesch

Artificial intelligence (AI) has been taking root in various sectors, from elaborate translation tools to evolving personalization algorithms in social media. Likewise, the music production industry is finding applications for AI-based technologies that take on tasks usually requiring skilled and creative professionals. AI-driven products for music production are currently available for a broad range of applications, from musical composition and mixing assistance all the way to audio finalization in mastering.

A popular example is the online platform Landr. It offers an automated tool, based on machine learning, that is able to master single audio files within minutes. In June this year, the company introduced a feature called album mastering. Users can now upload a sequence of songs to be turned into a coherent-sounding end product. This is a key step of the mastering process that used to require manual work.

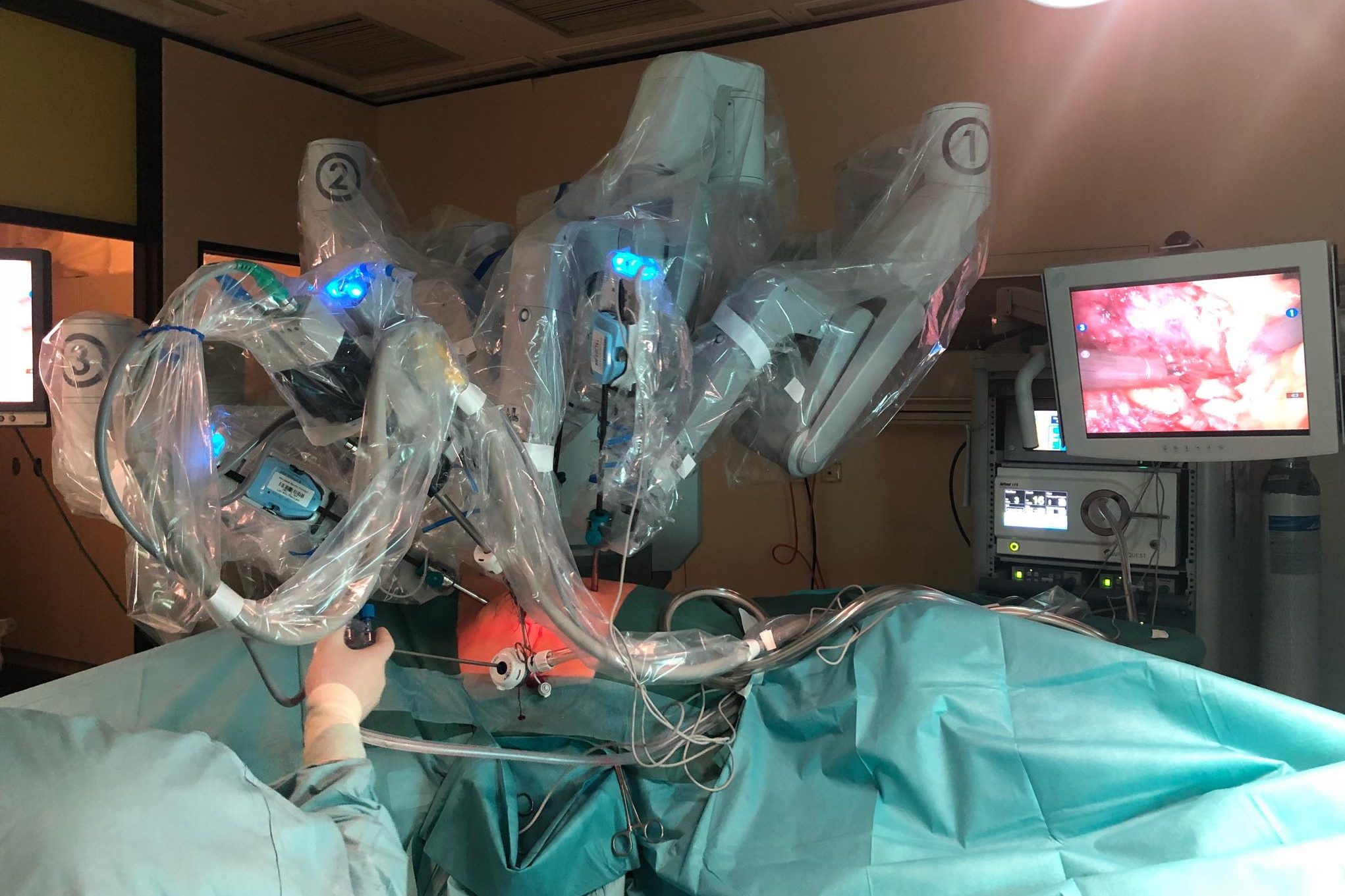

Instead of finalizing an audio product through studio speakers in a control room, AI mastering works entirely virtually. // Image: Sebastian Lesch

Benefits for users

Services like Landr are time- and cost-saving alternatives to traditional workflows, which usually include numerous manual tasks and expenses for professional services. Since its initial release in 2014, Landr has gained considerable popularity, boasting two million tracks that were mastered using its automatic self-learning tool.

As with most AI solutions, users are offered convenient alternatives that work highly efficiently in terms of time and personnel expenditure. To draw a comparison, one could imagine a house painter who meticulously paints a living room in the time it takes to have a cigarette break, at a cost to the homeowner equivalent to two cups of coffee.

Essential for machine learning: Data

AI technologies that apply machine learning are based on algorithms that are created automatically by training the system with large amounts of data. Generally, data is already available in vast quantities, but it lacks structure. Structuring that information and making it usable in a cost-effective way is one of the current tasks in research.

But collecting data from the different stages of the musical workflow can be difficult. For example, building an AI that is able to fully adjust and mix individual sound sources, like tracks of different instruments, would require access to the raw, separated tracks within music recordings. The challenge is that this mixing material is usually well protected.

Can artificial intelligence emulate creativity?

Concerning human approaches and solutions, Joshua Reiss, professor of audio engineering at the Centre for Digital Music at Queen Mary University of London and a co-founder of Landr, states: "It is very difficult to understand the solutions and where they come from, so we may call them «creative» when more accurately they are «complex»."

The challenge of understanding creative and artistic decisions has been another limitation of AI for musical tasks. Artificial intelligence can offer solutions for complicated problems by exploring the acquired data, using pattern recognition and building neural pathways. But these are still merely a subset of the creative human touch. Reiss explains: "There are too many unknowns, too many subtle aspects, and the human element is far more adaptive."

In the field of music composition, AI is able to create pieces of music, but this merely comes down to imitating the musical styles it has been trained on. // Image: Pixabay

Potential for future audio applications

At the same time, possibilities for products with artificial intelligence are growing rapidly. Michael Stadtschnitzer of the Fraunhofer Institute for Intelligent Analysis and Information Systems (IAIS) refers to already available products in other areas: "Many applications that have been unthinkable not too many years ago, like digital home assistants for instance, have already entered the consumer market."

The NetMedia department at Fraunhofer IAIS focuses on application-oriented research that addresses audio-specific tasks for AI. The research includes an audio mining system that uses pattern recognition to make media information such as speech searchable, for example in radio and TV content.

Reiss also sees a lot of opportunities for AI in music, as its potential has not yet been fully explored. He compares it to the advances of visual processing in smartphones, where face, scene and motion detection, red-eye removal, and autofocus are already standard in commercial products.

Catering to a growing audience

AI tools that are already available, like Landr, cater to a broad group of people, in this case by offering an accessible way to optimize audio content. This contributes to an ongoing development in which trends like home recording and streaming are extending the music industry "from «upper circles» towards the masses", as Stefanie Acquavella-Rauch, spokesperson of the panel for digital musicology within the German musicology institution GfM, describes it.

As of now, AI and automation systems are mostly not intended to match the work of professionals, according to Reiss. However, automatic systems could reduce the time spent on repetitive tasks in music production, or they could be used in situations where a sound engineer is not available, such as during band practice, at small pub gigs, or even in sound design for video games.

Historical perspective: Closing in on a disruption?

Stefanie Acquavella-Rauch regards disruption – in the case of music – as a slow process that is mostly connected to a change of media. An example is the invention of recording and reproduction techniques like the phonograph cylinder in the late 19th century. Music was no longer passed on only by transcribing and playing it, but could be replayed.

With the recent growth of music streaming, the volume and availability of music is reaching a new level. According to IFPI’s Global Music Report 2018, streaming revenue increased globally by 41.1 percent and contributed to a digital share of the global music market revenue of 54 percent. That marks a huge change in the distribution of music. Acquavella-Rauch concludes: "Earlier, the availability of recorded music used to be confined. Whereas in the digital era, the availability is growing boundless."

Areas of AI application for music production

In mixing, artificial intelligence is already able to make pre-adjustments for sound processing. // Image: Sebastian Lesch

Composition: A fair number of projects have taken on the task of creating an AI that is able to either assist in composing or even imitate certain composing styles. DeepBach is an example of AI composition, developed to mimic the style of Johann Sebastian Bach. Melodrive and Aiva are commercially available tools for AI scores. Sony CSL’s Flow Machines also created a virtual musician called Skygge, which released an entire AI-composed album in January.

Mixing: Processing the sound of single audio tracks and finding a balance between different sound sources are among the main tasks in audio mixing. An example of an available AI application in that field is the Track Assistant in iZotope’s software tool Neutron 2. This tool detects instrument types and automatically chooses settings for the signal processing.

Mastering: Mastering is the last step of a musical production before pressing or digital distribution. It includes technical adjustments as well as a final aesthetic polish. Landr and CloudBounce offer audio mastering based on self-learning artificial intelligence. Landr has extended its services to digital distribution and sound sample libraries, while CloudBounce is designed as a blockchain-driven, decentralized AI audio ecosystem. The corresponding white paper can be found here: www.cloudbounce.com/whitepaper.
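To give a rough idea of what one "technical adjustment" in mastering can look like, the following Python sketch brings the songs of an album to a common loudness level. It is only an illustration under simplifying assumptions: it uses plain RMS level matching with a peak ceiling, not machine learning, and it is not the method used by Landr or CloudBounce. The function names, the target level and the synthetic sine-wave "tracks" are all hypothetical.

```python
import math

def rms(samples):
    """Root-mean-square level of a list of float samples in [-1.0, 1.0]."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def match_album_levels(tracks, target_rms=0.1, peak_ceiling=0.99):
    """Scale each track toward a common RMS level (hypothetical helper).

    If the required gain would push a track's peak above peak_ceiling,
    the gain is reduced so that the peak lands exactly on the ceiling.
    """
    matched = []
    for samples in tracks:
        level = rms(samples)
        if level == 0.0:
            # Silent or empty track: nothing to scale.
            matched.append(list(samples))
            continue
        gain = target_rms / level
        peak = max(abs(s) for s in samples)
        if peak * gain > peak_ceiling:
            gain = peak_ceiling / peak
        matched.append([s * gain for s in samples])
    return matched

def sine(amplitude, n=4410, freq=440.0, rate=44100):
    """Synthetic stand-in for a song: a short sine tone (assumption)."""
    return [amplitude * math.sin(2 * math.pi * freq * i / rate) for i in range(n)]

# One quiet and one loud "song" end up at the same RMS level.
album = [sine(0.05), sine(0.8)]
matched = match_album_levels(album)
for original, result in zip(album, matched):
    print(f"RMS before: {rms(original):.3f}  after: {rms(result):.3f}")
```

Real mastering involves far more than level matching, such as equalization, compression and perceptual loudness measurement, so this sketch covers only the simplest part of making an album sound coherent.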

Teaser Image: Sebastian Lesch

Author:

Sebastian Andreas Lesch